Enhancing Trust in Healthcare: The Role of AI Explainability and Professional Familiarity

DOI:

https://doi.org/10.62019/abbdm.v4i1.100

Keywords:

Artificial Intelligence, Healthcare, Trust, AI Explainability, Professional Familiarity, Pakistan.

Abstract

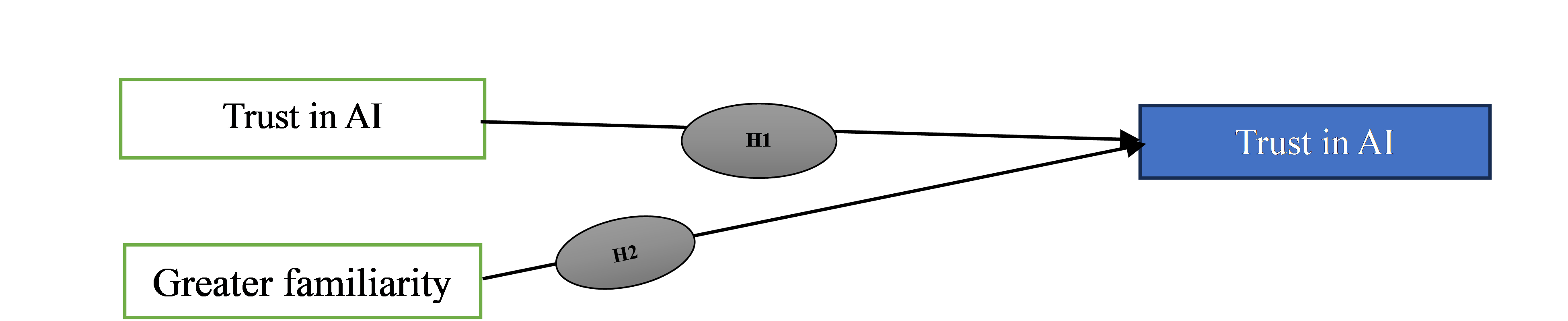

The integration of Artificial Intelligence (AI) in healthcare has been impeded by a significant issue: a lack of trust among healthcare professionals, stemming from the opacity of AI decision-making processes and a general unfamiliarity with AI technologies. This study investigates the impact of AI's explainability and healthcare professionals' familiarity with AI on their trust in AI applications within healthcare settings. Adopting a quantitative research methodology, the study used a structured questionnaire to gather data from a diverse group of healthcare professionals, including doctors, nurses, and administrators, across various hospitals and healthcare institutions in Pakistan. The research employed a stratified random sampling approach to obtain a comprehensive and representative data set. The results indicated a positive and significant relationship between AI explainability and trust in AI (Path Coefficient: 0.62, t-Value: 5.20), suggesting that clearer and more transparent AI decision-making processes enhance healthcare professionals' trust. Similarly, familiarity with AI was found to positively influence trust in AI (Path Coefficient: 0.48, t-Value: 4.35), highlighting the importance of exposure to and understanding of AI systems among healthcare professionals. These findings have crucial implications for both AI developers and healthcare administrators. For AI developers, the emphasis must be on creating more transparent and interpretable AI systems. For healthcare administrators, the results suggest the need to invest in training and educational programs to increase professionals' familiarity with AI, thereby enhancing trust and acceptance. The study contributes to the existing literature by empirically validating the importance of AI explainability and familiarity in building trust in AI within the healthcare context, especially in a developing country setting.
For policymakers, these insights are critical in guiding strategies and policies aimed at effectively integrating AI into healthcare systems. By addressing the identified factors, healthcare sectors can better leverage AI's potential, leading to improved patient care and more efficient healthcare operations.
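
For readers who want to gauge what the reported t-values imply, the following is a minimal sketch rather than the authors' analysis. It assumes each t-value is the path coefficient divided by its standard error, and it uses a large-sample normal approximation for the two-tailed p-value, because the abstract does not report the sample size or degrees of freedom. The function names `implied_se` and `two_tailed_p_normal` are hypothetical helpers introduced only for illustration.

```python
import math


def implied_se(coef: float, t_value: float) -> float:
    """Standard error implied by a coefficient and its t-value (assumes t = coef / SE)."""
    return coef / t_value


def two_tailed_p_normal(t_value: float) -> float:
    """Two-tailed p-value under a standard normal approximation (assumption: large sample)."""
    return math.erfc(abs(t_value) / math.sqrt(2))


# Path coefficients and t-values as reported in the abstract.
reported = {
    "AI explainability -> trust in AI": (0.62, 5.20),
    "Familiarity with AI -> trust in AI": (0.48, 4.35),
}

for path, (coef, t_value) in reported.items():
    se = implied_se(coef, t_value)
    p = two_tailed_p_normal(t_value)
    print(f"{path}: implied SE ~ {se:.3f}, approximate two-tailed p ~ {p:.1e}")
```

Under these assumptions, both t-values lie well beyond the conventional 1.96 threshold for significance at the 5% level, which is consistent with the abstract's description of the relationships as significant.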

License

Copyright (c) 2024 The Asian Bulletin of Big Data Management

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.